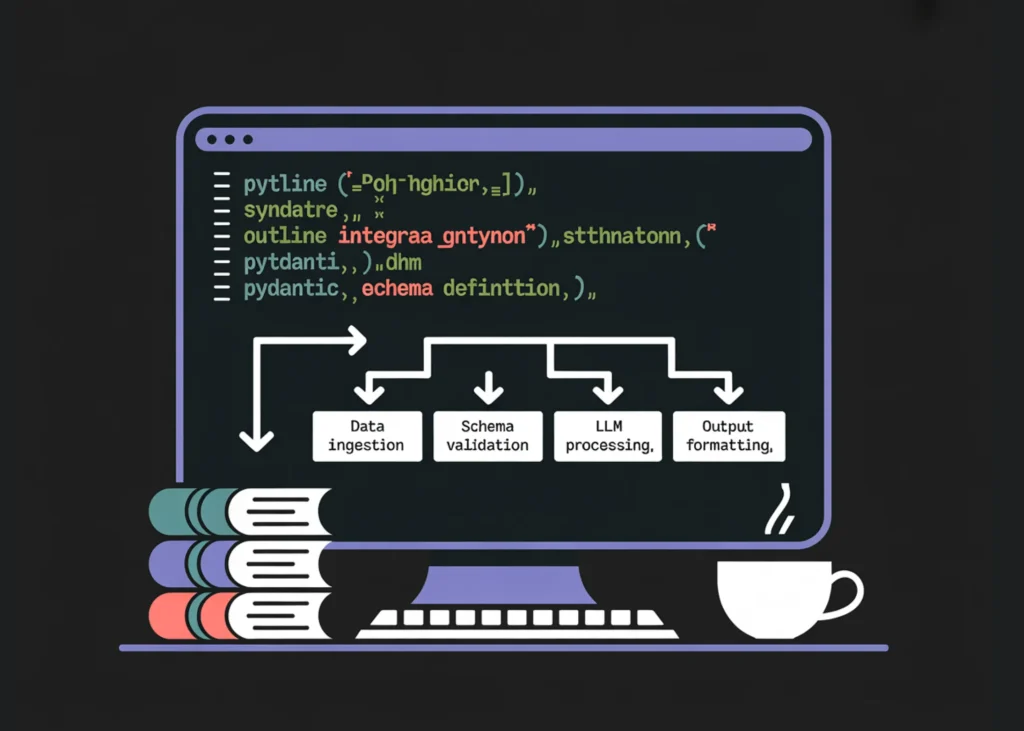

How to Build Type-Safe, Schema-Constrained, and Function-Driven LLM Pipelines Using Outlines and Pydantic

In this tutorial, we build a workflow using Outlines to generate structured, type-safe outputs from language models. We work with typed constraints such as Literal, int, and bool, design prompt templates with outlines.Template, and enforce schema validation with Pydantic models. We also implement a JSON recovery fallback and a function-calling pattern that generates validated arguments before executing a Python function. Throughout, the focus is on reliability and constraint enforcement in structured generation.

import subprocess
import sys

# Install the libraries used in this tutorial (quiet mode).
subprocess.check_call([sys.executable, "-m", "pip", "install", "-q",
                       "outlines", "transformers", "accelerate", "sentencepiece", "pydantic"])

import torch
import outlines
from transformers import AutoTokenizer, AutoModelForCausalLM

from typing import Literal, List, Union, Annotated
from pydantic import BaseModel, Field
from enum import Enum

print("Torch:", torch.__version__)
print("CUDA available:", torch.cuda.is_available())
print("Outlines:", getattr(outlines, "__version__", "unknown"))

# Pick the GPU when one is available, otherwise fall back to CPU.
device = "cuda" if torch.cuda.is_available() else "cpu"
print("Using device:", device)

MODEL_NAME = "HuggingFaceTB/SmolLM2-135M-Instruct"

tokenizer = AutoTokenizer.from_pretrained(MODEL_NAME, use_fast=True)
hf_model = AutoModelForCausalLM.from_pretrained(
    MODEL_NAME,
    torch_dtype=torch.float16 if device == "cuda" else torch.float32,
    device_map="auto" if device == "cuda" else None,
)

if device == "cpu":
    hf_model = hf_model.to(device)

# Wrap the Hugging Face model and tokenizer for use with Outlines.
model = outlines.from_transformers(hf_model, tokenizer)

def build_chat(user_text: str, system_text: str = "You are a precise assistant. Follow instructions exactly.") -> str:
    # Use the tokenizer's chat template; fall back to a plain-text prompt if it fails.
    try:
        msgs = [{"role": "system", "content": system_text}, {"role": "user", "content": user_text}]
        return tokenizer.apply_chat_template(msgs, tokenize=False, add_generation_prompt=True)
    except Exception:
        return f"{system_text}\n\nUser: {user_text}\nAssistant:"

def banner(title: str):
    print("\n" + "=" * 90)
    print(title)
    print("=" * 90)

We install the required dependencies and initialize the Outlines pipeline with a lightweight instruct model, SmolLM2-135M-Instruct. The device is selected at startup: the model loads in float16 on the GPU when CUDA is available and in float32 on the CPU otherwise. We also define two helpers: build_chat, which applies the tokenizer's chat template and falls back to a plain-text prompt if that fails, and banner, which prints section headers.
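The fallback behavior of the chat helper can be tried without loading a model. The following is a minimal sketch, assuming a hypothetical `render_prompt` helper and a `template_fn` callable that stands in for the tokenizer's chat template; neither name comes from Outlines or Transformers.

```python
from typing import Callable, Dict, List, Optional

Message = Dict[str, str]


def render_prompt(
    user_text: str,
    system_text: str = "You are a precise assistant. Follow instructions exactly.",
    template_fn: Optional[Callable[[List[Message]], str]] = None,
) -> str:
    """Format a chat prompt, falling back to plain text if templating fails.

    `template_fn` is a hypothetical stand-in for a tokenizer's chat template.
    """
    msgs = [
        {"role": "system", "content": system_text},
        {"role": "user", "content": user_text},
    ]
    if template_fn is not None:
        try:
            return template_fn(msgs)
        except Exception:
            pass  # fall through to the plain-text layout
    return f"{system_text}\n\nUser: {user_text}\nAssistant:"


def broken_template(msgs: List[Message]) -> str:
    # Simulates a tokenizer that has no chat template configured.
    raise ValueError("no chat template configured")


fallback_prompt = render_prompt("Say hi.", system_text="Be brief.", template_fn=broken_template)
print(fallback_prompt)
```

Catching every exception keeps the notebook running, but it also hides real template errors, so logging the exception before falling back may be worth considering in longer-lived code.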

def extract_json_object(s: str) -> str:
    # Return the first balanced {...} span, ignoring braces inside JSON strings.
    s = s.strip()
    start = s.find("{")
    if start == -1:
        return s
    depth = 0
    in_str = False
    esc = False
    for i in range(start, len(s)):
        ch = s[i]
        if in_str:
            if esc:
                esc = False
            elif ch == "\\":
                esc = True
            elif ch == '"':
                in_str = False
        else:
            if ch == '"':
                in_str = True
            elif ch == "{":
                depth += 1
            elif ch == "}":
                depth -= 1
                if depth == 0:
                    return s[start:i + 1]
    # No balanced close was found; return everything from the first "{".
    return s[start:]

def json_repair_minimal(bad: str) -> str:
    # Drop anything after the last closing brace.
    bad = bad.strip()
    last = bad.rfind("}")
    if last != -1:
        return bad[:last + 1]
    return bad

def safe_validate(model_cls, raw_text: str):
    # Validate the extracted JSON; retry once with the minimal repair.
    raw = extract_json_object(raw_text)
    try:
        return model_cls.model_validate_json(raw)
    except Exception:
        raw2 = json_repair_minimal(raw)
        return model_cls.model_validate_json(raw2)

banner("2) Typed outputs (Literal / int / bool)")

sentiment = model(
    build_chat("Analyze the sentiment: 'This product completely changed my life!'. Return one label only."),
    Literal["Positive", "Negative", "Neutral"],
    max_new_tokens=8,
)
print("Sentiment:", sentiment)

bp = model(build_chat("What's the boiling point of water in Celsius? Return integer only."), int, max_new_tokens=8)
print("Boiling point (int):", bp)

prime = model(build_chat("Is 29 a prime number? Return true or false only."), bool, max_new_tokens=6)
print("Is prime (bool):", prime)

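We implement JSON extraction and a minimal repair utility to recover structured outputs from imperfect generations: extract_json_object scans for the first balanced {...} span while ignoring braces inside strings, and json_repair_minimal trims anything after the last closing brace. We then demonstrate typed generation using Literal, int, and bool, where the output type constrains decoding so the model can only produce one of the allowed labels, an integer, or a boolean. The constraint guarantees the form of the answer at generation time; it does not guarantee that the answer is correct.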
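The salvage idea can also be approached with the standard library alone. The sketch below is an alternative technique, not the tutorial's scanner: it uses `json.JSONDecoder.raw_decode` to parse the first valid JSON object found in noisy text. The helper name `first_json_object` is hypothetical.

```python
import json
from typing import Any, Optional


def first_json_object(text: str) -> Optional[Any]:
    """Return the first decodable JSON object in `text`, or None."""
    decoder = json.JSONDecoder()
    pos = text.find("{")
    while pos != -1:
        try:
            # raw_decode parses one JSON value starting at `pos`
            # and ignores whatever follows it.
            obj, _end = decoder.raw_decode(text, pos)
            return obj
        except json.JSONDecodeError:
            # This "{" did not start valid JSON; try the next one.
            pos = text.find("{", pos + 1)
    return None


noisy = 'Sure! Here it is: {"a": 1, "note": "a } inside a string"} Hope that helps.'
parsed = first_json_object(noisy)
print(parsed)
```

Unlike the brace-counting scanner, this version returns a parsed object rather than a substring, and it cannot recover a truncated object, so the two approaches cover different failure modes.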

import textwrap

# Reusable prompt template; {{ text }} is filled in at call time.
tmpl = outlines.Template.from_string(textwrap.dedent("""
<|system|>
You are a strict classifier. Return ONLY one label.
<|user|>
Classify sentiment of this text:
{{ text }}
Labels: Positive, Negative, Neutral
<|assistant|>
""").strip())

templated = model(tmpl(text="The food was cold but the staff were kind."), Literal["Positive", "Negative", "Neutral"], max_new_tokens=8)
print("Template sentiment:", templated)

We use outlines.Template to build a reusable prompt template and pair it with a Literal output type. The template injects the input text into a fixed layout with hand-written role markers and the list of allowed labels, so the same prompt structure can be reused across inputs while the Literal type keeps the response constrained to one of the three labels.
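One practical concern with this pattern is that the labels appear twice, once in the prompt text and once in the Literal type, and the two can drift apart. The sketch below keeps them in a single tuple. It uses the standard library's `string.Template` as a stand-in for outlines.Template, and the names `LABELS`, `SentimentLabel`, and `build_prompt` are hypothetical.

```python
from string import Template
from typing import Literal, get_args

# Single source of truth for the allowed labels.
LABELS = ("Positive", "Negative", "Neutral")

# At runtime, Literal accepts a tuple of values, so the constraint is
# derived from LABELS. Static type checkers may not accept this form.
SentimentLabel = Literal[LABELS]

PROMPT = Template(
    "<|system|>\n"
    "You are a strict classifier. Return ONLY one label.\n"
    "<|user|>\n"
    "Classify sentiment of this text:\n"
    "$text\n"
    "Labels: $labels\n"
    "<|assistant|>"
)


def build_prompt(text: str) -> str:
    # string.Template is a stdlib stand-in for outlines.Template here.
    return PROMPT.substitute(text=text, labels=", ".join(LABELS))


prompt = build_prompt("The food was cold but the staff were kind.")
print(prompt)
print(get_args(SentimentLabel))
```

Adding or renaming a label then requires a change in one place only, and both the prompt and the output constraint pick it up.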

class TicketPriority(str, Enum):
    low = "low"
    medium = "medium"
    high = "high"
    urgent = "urgent"

# Regex-constrained string types.
IPv4 = Annotated[str, Field(pattern=r"^((25[0-5]|2[0-4]\d|[01]?\d\d?)\.){3}(25[0-5]|2[0-4]\d|[01]?\d\d?)$")]
ISODate = Annotated[str, Field(pattern=r"^\d{4}-\d{2}-\d{2}$")]

class ServiceTicket(BaseModel):
    priority: TicketPriority
    category: Literal["billing", "login", "bug", "feature_request", "other"]
    requires_manager: bool
    summary: str = Field(min_length=10, max_length=220)
    action_items: List[str] = Field(min_length=1, max_length=6)

class NetworkIncident(BaseModel):
    affected_service: Literal["dns", "vpn", "api", "website", "database"]
    severity: Literal["sev1", "sev2", "sev3"]
    public_ip: IPv4
    start_date: ISODate
    mitigation: List[str] = Field(min_length=2, max_length=6)

email = """
Subject: URGENT – Cannot access my account after payment
I paid for the premium plan 3 hours ago and still can't access any features.
I have a client presentation in an hour and need the analytics dashboard.
Please fix this immediately or refund my payment.
""".strip()

# Passing the schema as the output type constrains generation to matching JSON.
ticket_text = model(
    build_chat(
        "Extract a ServiceTicket from this message.\n"
        "Return JSON ONLY matching the ServiceTicket schema.\n"
        "Action items must be distinct.\n\nMESSAGE:\n" + email
    ),
    ServiceTicket,
    max_new_tokens=240,
)

# Parse into a ServiceTicket when the model hands back a string.
ticket = safe_validate(ServiceTicket, ticket_text) if isinstance(ticket_text, str) else ticket_text
print("ServiceTicket JSON:\n", ticket.model_dump_json(indent=2))

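We define Pydantic schemas that combine an enum, Literal fields, regex-constrained strings, length limits, and bounded lists. We then extract a ServiceTicket object from raw email text by passing the schema as the output type, so decoding follows the schema. When the result comes back as a string, safe_validate parses it into a ServiceTicket and retries once with the minimal repair if validation fails. NetworkIncident is defined along the same lines but is not used in the extraction call shown here.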
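It helps to check what the two regex constraints actually accept before relying on them. The sketch below tests the same patterns with the standard `re` module, independent of Pydantic and Outlines. The helper names `matches` and `is_real_date` are hypothetical, and `is_real_date` is an extra check that the schema itself does not perform.

```python
import re
from datetime import date

# Same patterns as the IPv4 and ISODate annotations in the schema.
IPV4_PATTERN = r"^((25[0-5]|2[0-4]\d|[01]?\d\d?)\.){3}(25[0-5]|2[0-4]\d|[01]?\d\d?)$"
ISO_DATE_PATTERN = r"^\d{4}-\d{2}-\d{2}$"


def matches(pattern: str, value: str) -> bool:
    return re.fullmatch(pattern, value) is not None


def is_real_date(value: str) -> bool:
    """Hypothetical extra check: correct shape and a valid calendar date."""
    if not matches(ISO_DATE_PATTERN, value):
        return False
    try:
        date.fromisoformat(value)
        return True
    except ValueError:
        return False


for candidate in ["192.168.1.10", "256.1.1.1", "1.2.3"]:
    print(candidate, matches(IPV4_PATTERN, candidate))

for candidate in ["2024-02-29", "2024-13-45", "2024-1-5"]:
    print(candidate, matches(ISO_DATE_PATTERN, candidate), is_real_date(candidate))
```

The date pattern only checks the shape of the string, so a value such as 2024-13-45 passes the regex even though it is not a real date. If calendar validity matters, a separate check along the lines of `is_real_date` is one option.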

class AddArgs(BaseModel):
    a: int = Field(ge=-1000, le=1000)
    b: int = Field(ge=-1000, le=1000)

def add(a: int, b: int) -> int:
    return a + b

# Generate arguments that conform to AddArgs.
args_text = model(
    build_chat("Return JSON ONLY with two integers a and b. Make a odd and b even."),
    AddArgs,
    max_new_tokens=80,
)

# Validate before calling the function.
args = safe_validate(AddArgs, args_text) if isinstance(args_text, str) else args_text
print("Args:", args.model_dump())
print("add(a,b) =", add(args.a, args.b))

print("Tip: For best speed and fewer truncations, switch Colab Runtime → GPU.")

We implement a function-calling style workflow by generating arguments that conform to the AddArgs schema, validating them, and only then calling add with the validated values. The schema bounds both integers to the range -1000 to 1000; the prompt's request that a be odd and b be even is an instruction to the model and is not enforced by the schema. This schema-first pattern keeps tool invocation controlled, because the function never receives arguments that failed validation.
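The same validate-then-call idea extends to more than one function through a small registry. The sketch below uses only the standard library and a hand-written validator that is stricter than the Pydantic model (it rejects extra keys and non-integer values outright). The names `TOOLS`, `validate_add_args`, and `call_tool` are hypothetical and not part of Outlines or Pydantic.

```python
import json
from typing import Any, Callable, Dict, Tuple


def add(a: int, b: int) -> int:
    return a + b


def validate_add_args(payload: Any) -> Dict[str, int]:
    """Hand-written mirror of the AddArgs bounds: two ints in [-1000, 1000]."""
    if not isinstance(payload, dict) or set(payload) != {"a", "b"}:
        raise ValueError("expected a JSON object with exactly the keys a and b")
    for key, value in payload.items():
        # bool is a subclass of int, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, int):
            raise ValueError(f"{key} must be an integer")
        if not -1000 <= value <= 1000:
            raise ValueError(f"{key} is out of range")
    return {"a": payload["a"], "b": payload["b"]}


# Hypothetical registry: tool name -> (validator, function).
TOOLS: Dict[str, Tuple[Callable[[Any], Dict[str, Any]], Callable[..., Any]]] = {
    "add": (validate_add_args, add),
}


def call_tool(name: str, raw_json: str) -> Any:
    """Validate the raw JSON arguments, then call the registered function."""
    if name not in TOOLS:
        raise KeyError(f"unknown tool: {name}")
    validator, func = TOOLS[name]
    args = validator(json.loads(raw_json))
    return func(**args)


result = call_tool("add", '{"a": 3, "b": 4}')
print(result)
```

Because the function is looked up by name in a fixed registry and only ever receives validated arguments, model output never decides which code runs beyond the registered set.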

In conclusion, we implemented a structured generation pipeline using Outlines with typed outputs, schema validation, and constrained decoding. We moved from simple typed outputs to Pydantic-based extraction and function-style execution. We also added JSON salvage and validation fallbacks so the pipeline tolerates imperfect model outputs. The result is a practical starting point for schema-driven LLM applications.
