Agentic AI’s governance challenges under the EU AI Act in 2026

Several measures can reduce high levels of risk. Those that stand out include agent identity, comprehensive logs, policy checks, human oversight, rapid revocation, vendor documentation, and evidence prepared for presentation to regulators.

Decision makers have several options for creating a record of the activities undertaken by agentic systems. For example, Asqav, a Python SDK (software development kit), can sign each agent’s action cryptographically and link all records into an immutable hash chain, a technique more commonly associated with blockchain technology. If someone or something changes or removes a record, verification of the chain fails.
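To make the idea of a signed hash chain concrete, here is a minimal sketch using only the Python standard library. It is not Asqav's API: the function names `append_record` and `verify_chain`, the record fields, and the use of an HMAC shared key as a stand-in for a real digital signature are all assumptions made for illustration.

```python
import hashlib
import hmac
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first record


def _payload(body: dict) -> bytes:
    # Canonical JSON so identical content always yields identical bytes.
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode("utf-8")


def append_record(chain: list, key: bytes, agent_id: str, action: str) -> dict:
    """Append a signed record that is linked to the previous record's hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    body = {
        "index": len(chain),
        "agent_id": agent_id,
        "action": action,
        "prev_hash": prev_hash,
    }
    payload = _payload(body)
    record = dict(body)
    record["hash"] = hashlib.sha256(payload).hexdigest()
    # HMAC is a hypothetical stand-in for an asymmetric signature.
    record["signature"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    chain.append(record)
    return record


def verify_chain(chain: list, key: bytes) -> bool:
    """Return False if any record was altered, reordered, or removed mid-chain."""
    prev_hash = GENESIS_HASH
    for index, record in enumerate(chain):
        body = {k: record[k] for k in ("index", "agent_id", "action", "prev_hash")}
        payload = _payload(body)
        if record["index"] != index or record["prev_hash"] != prev_hash:
            return False
        if record["hash"] != hashlib.sha256(payload).hexdigest():
            return False
        expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(record["signature"], expected):
            return False
        prev_hash = record["hash"]
    return True
```

In this sketch, editing a record or deleting one from the middle of the chain breaks verification. Detecting the removal of the most recent records would additionally require storing the latest hash somewhere outside the chain, and a production system would likely use per-agent asymmetric keys in place of a shared secret.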

For governance teams, a verbose, centralised, and possibly encrypted system of record covering all agentic AIs provides far more data than the scattered text logs produced by individual software platforms. Regardless of the technical details of how records are made and kept, IT leaders need to see exactly where, when, and how agentic instances are acting throughout the enterprise.

Many organisations fail at the first step of recording automated, AI-driven activity: keeping a registry of every agent in operation, each uniquely identified, along with records of its capabilities and granted permissions. This ‘agentic asset list’ ties neatly into the requirements of the EU AI Act’s Article 9, which states:

Article 9: For high-risk areas, AI risk management has to be an ongoing, evidence-based process built into every stage of deployment (development, preparation, production), and be under constant review.
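The agent registry described above could be sketched as follows. This is an illustrative outline only: the class names `AgentRecord` and `AgentRegistry`, the `owner` field, and the permission strings are hypothetical, and a real registry would sit behind persistent, access-controlled storage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    agent_id: str            # unique identifier for the agent
    owner: str               # hypothetical field: accountable team or person
    capabilities: frozenset  # what the agent is able to do
    permissions: frozenset   # what the agent has been granted


class AgentRegistry:
    """In-memory 'agentic asset list' with a simple revocation switch."""

    def __init__(self):
        self._agents = {}
        self._revoked = set()

    def register(self, record: AgentRecord) -> None:
        # Each agent must be uniquely identified.
        if record.agent_id in self._agents:
            raise ValueError(f"agent already registered: {record.agent_id}")
        self._agents[record.agent_id] = record

    def revoke(self, agent_id: str) -> None:
        # Revocation takes effect on the next permission check.
        if agent_id not in self._agents:
            raise KeyError(agent_id)
        self._revoked.add(agent_id)

    def is_allowed(self, agent_id: str, permission: str) -> bool:
        # Unknown or revoked agents are denied by default.
        record = self._agents.get(agent_id)
        if record is None or agent_id in self._revoked:
            return False
        return permission in record.permissions
```

Denying unknown and revoked agents by default means that an agent missing from the registry cannot act, which keeps the asset list and the policy check in step with each other.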

Furthermore, decision-makers need to be aware of the Act’s Article 13:

High-risk AI systems have to be designed in such a way that those deploying them can understand a system’s output. Thus, an AI system from a third party must be interpretable by its users (not an opaque code blob) and should be supplied with enough documentation to ensure its safe and lawful use.

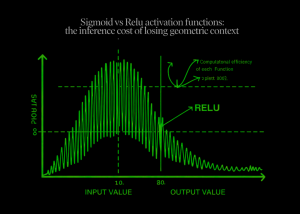

This requirement means the choice of model and its methods of deployment are both technical and regulatory considerations.